Last updated on February 10th, 2026 at 10:29 am

In notebooks, machine learning frequently appears simple, but when data grows beyond a single computer, it becomes a very different field.

This course directly fills that gap. Machine Learning with Apache Spark was created for students who already know the fundamentals of data and want to see how machine learning actually works at scale.

As you progress through the course, the emphasis moves from isolated algorithms to practical workflows—how Spark’s distributed architecture is used to prepare, transform, and feed data into models.

You work with Spark ML pipelines, structured data processing, and use cases that mirror what data engineers and ML practitioners encounter on the job, rather than abstract theory.

What makes this course valuable is its balance. It is not merely conceptual, nor does it try to turn you into a research-focused machine learning specialist.

Rather, it demonstrates how machine learning fits into large-scale data systems, helping you understand not only how models are built but also why Spark is frequently the preferred tool when reliability, speed, and data volume matter.

This course offers a solid and useful foundation for anyone who wants to work with big data-driven machine learning systems.

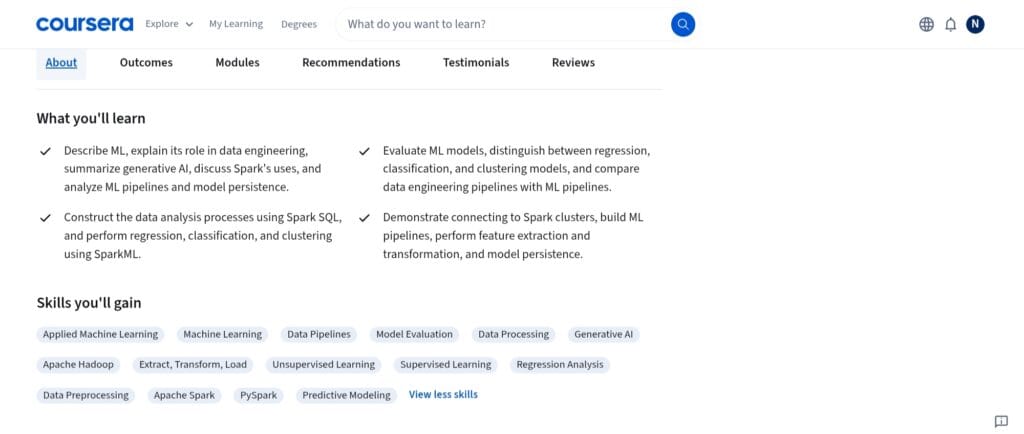

What skills will you learn in this course?

After completing this course, you will have a well-defined set of practical skills that lie at the nexus of large-scale data engineering and machine learning.

The course teaches you to think about end-to-end workflows that can function on distributed systems rather than considering models in isolation.

You learn how to create and use machine learning models using Apache Spark ML, including approaches for clustering, regression, and classification.

More importantly, you come to understand how Spark manages computation across a cluster, and when and why these models are applied to very large datasets, a perspective that conventional machine learning courses often leave out.

A substantial portion of the curriculum is centered on data preparation and feature engineering with Spark.

You get practical experience handling structured data, processing raw data using Spark SQL, and creating features that can be applied to several models.

Since real-world machine learning work depends much more on data readiness than on model selection, this skill alone is extremely valuable.

Designing machine learning pipelines is another area where the course builds strong proficiency.

You discover how to combine model training, evaluation, and data transformations into repeatable Spark machine learning pipelines, as well as how to save and reuse them.

This reflects how machine learning systems are built in production rather than in lab notebooks.
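The fit-then-transform pattern behind such pipelines can be sketched in a few lines. The following is a plain-Python illustration, not Spark code and not material from the course: the class names `MeanCenter`, `LeastSquaresLine`, and `SimplePipeline` are hypothetical, and the toy data is made up. Spark ML's own `Pipeline` operates on DataFrames across a cluster, but it follows the same broad idea of chaining stages, fitting them once, and reusing the fitted result.

```python
import json
from statistics import mean


class MeanCenter:
    """Transformation stage: learns the feature mean, then subtracts it."""

    def fit(self, xs, ys):
        self.mu = mean(xs)
        return self

    def transform(self, xs):
        return [x - self.mu for x in xs]


class LeastSquaresLine:
    """Model stage: one-feature linear regression fitted by least squares."""

    def fit(self, xs, ys):
        x_bar, y_bar = mean(xs), mean(ys)
        sxx = sum((x - x_bar) ** 2 for x in xs)
        sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
        self.slope = sxy / sxx
        self.intercept = y_bar - self.slope * x_bar
        return self

    def transform(self, xs):
        return [self.intercept + self.slope * x for x in xs]


class SimplePipeline:
    """Chains stages so training and prediction apply the same steps in order."""

    def __init__(self, stages):
        self.stages = stages

    def fit(self, xs, ys):
        for stage in self.stages:
            stage.fit(xs, ys)
            xs = stage.transform(xs)  # each stage sees the previous stage's output
        return self

    def transform(self, xs):
        for stage in self.stages:
            xs = stage.transform(xs)
        return xs


def rmse(predictions, targets):
    """Evaluation step: root mean squared error."""
    return mean((p - t) ** 2 for p, t in zip(predictions, targets)) ** 0.5


# Toy training data following y = 2x + 1 (made up for illustration).
train_x = [1.0, 2.0, 3.0, 4.0]
train_y = [3.0, 5.0, 7.0, 9.0]

pipeline = SimplePipeline([MeanCenter(), LeastSquaresLine()]).fit(train_x, train_y)
train_error = rmse(pipeline.transform(train_x), train_y)

# Persist the learned parameters as JSON, then rebuild the pipeline from them.
saved = json.dumps({
    "mu": pipeline.stages[0].mu,
    "slope": pipeline.stages[1].slope,
    "intercept": pipeline.stages[1].intercept,
})
params = json.loads(saved)
center, line = MeanCenter(), LeastSquaresLine()
center.mu = params["mu"]
line.slope, line.intercept = params["slope"], params["intercept"]
restored = SimplePipeline([center, line])
prediction = restored.transform([5.0])[0]
```

Because the centering step is stored together with the model, the restored pipeline applies exactly the same preparation to new data that was used during training, which is the main reason to package the steps as one unit.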

Lastly, you gain practical data engineering and scalability skills: working with distributed systems, understanding Spark architecture, and incorporating streaming or batch data into machine learning workflows.

Together, these skills give you the confidence to work with scalable machine learning systems, an essential capability for modern data and ML roles.

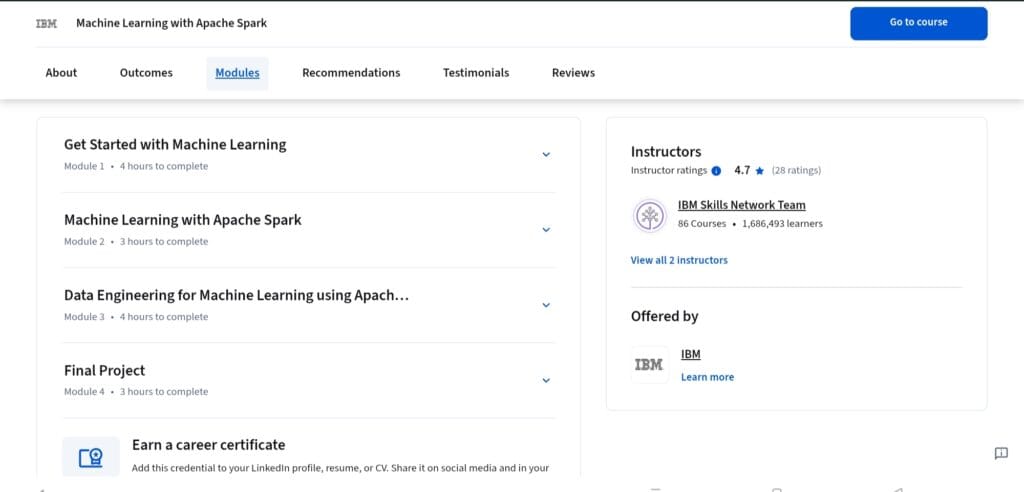

What concepts are taught in the Machine Learning with Apache Spark course?

Through a practical, systems-oriented lens, the course presents machine learning techniques, emphasizing their use when data and computation are distributed rather than confined to a single computer.

You begin with the foundations of machine learning: supervised and unsupervised learning, regression, classification, and clustering.

These ideas are reexamined as building blocks that must function effectively on massive datasets rather than as abstract theory.

The course places a strong emphasis on understanding evaluation metrics, model behavior, and the typical lifecycle of a machine learning solution.

Apache Spark and the Spark ML architecture are central to the program. You discover how Spark ML differs from conventional ML libraries, how distributed computing affects model training and performance, and how Spark handles data across clusters.

Ideas like DataFrames, resilient distributed datasets (RDDs), and Spark’s execution architecture are presented with clear applications to machine learning tasks.
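One way to see why distribution changes how computation is written: a statistic over the whole dataset has to be built from partial results computed independently on each partition. The sketch below is plain Python rather than Spark, with hypothetical function names and made-up data. On a real cluster the partitions would sit on different machines and the per-partition step would run in parallel.

```python
from functools import reduce


def split_into_partitions(rows, num_partitions):
    """Deal rows round-robin into partitions, standing in for data spread over workers."""
    return [rows[i::num_partitions] for i in range(num_partitions)]


def partial_stats(partition):
    """Work done independently on each partition: a local (sum, count) pair."""
    return (sum(partition), len(partition))


def merge_stats(left, right):
    """Combine two partial results into one."""
    return (left[0] + right[0], left[1] + right[1])


values = [4.0, 8.0, 15.0, 16.0, 23.0, 42.0]  # made-up data
partitions = split_into_partitions(values, num_partitions=3)

# On a cluster, this step would run in parallel, one task per partition.
partials = [partial_stats(p) for p in partitions]

# Only the small partial results are brought together to produce the final answer.
total, count = reduce(merge_stats, partials)
distributed_mean = total / count
```

The design point is that only the small `(sum, count)` pairs need to be moved and merged, never the raw rows, which is what keeps this style of computation practical as the data grows.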

Additionally, the course covers data engineering concepts for machine learning, such as Spark SQL data transformation, feature extraction, and ETL workflows.

You discover how clean, organized data enters machine learning pipelines and why inadequate data engineering frequently acts as a bottleneck in practical ML systems.

Machine learning pipelines and workflow orchestration are two further key ideas. You explore how models are trained and stored, how transformations, models, and evaluators are combined into reusable pipelines, and how consistency is preserved across experiments and deployments.

The course concludes by introducing the ideas of streaming and real-time data processing, demonstrating how Spark Structured Streaming can support machine learning workflows.

This makes it easier to comprehend how machine learning systems can adjust to constantly arriving data, which is becoming more and more typical in production settings.
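The core idea of adapting to continuously arriving data can be illustrated with a statistic that is updated batch by batch instead of being recomputed from scratch. This is a plain-Python sketch, not Spark Structured Streaming code: the `RunningMean` class and the sample batches are hypothetical, and a real streaming job would read its batches from a live source.

```python
class RunningMean:
    """Keeps a mean up to date without storing past records."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0

    def update(self, batch):
        """Fold one newly arrived batch into the current estimate."""
        for value in batch:
            self.count += 1
            # Incremental update: shift the mean toward the new value.
            self.mean += (value - self.mean) / self.count
        return self.mean


# Made-up batches standing in for data that arrives over time.
micro_batches = [[10.0, 20.0], [30.0], [40.0, 50.0, 60.0]]

tracker = RunningMean()
history = [tracker.update(batch) for batch in micro_batches]
```

After each batch the estimate reflects everything seen so far, and memory use stays constant no matter how long the stream runs.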

Together, these ideas provide a comprehensive understanding of how machine learning functions in scalable, production-ready data systems as opposed to isolated experiments.

Who should join this course?

Students who want to go beyond small-scale machine learning experiments and have some experience with data or programming are best suited for this course.

If you know the fundamentals of Python and basic data concepts, this course shows how machine learning is applied when datasets are too big for conventional tools.

Data engineers and aspiring data engineers who wish to expand their skill set with machine learning capabilities will find it especially beneficial.

The course demonstrates how machine learning (ML) easily integrates with distributed data systems and ETL pipelines, which is a typical requirement in actual data engineering roles.

Data analysts and machine learning professionals who are familiar with simple models but have never worked with Spark can also benefit.

By bridging the gap between model creation and scalable implementation, the course helps you see how your machine learning knowledge carries over to real-world settings.

Software developers and backend engineers who work with big data platforms will also benefit greatly from the course.

Whether you are already using Spark or plan to work in data-heavy systems, this course offers practical insight into how machine learning can be integrated without requiring research-level ML skills.

However, the pace could be difficult for complete beginners in data concepts or programming.

The best candidates for this course are those who wish to learn more about scalable machine learning and are getting ready for positions where dependability, performance, and data volume are crucial.

Will you get a job after completing this course?

To be clear, finishing this course alone will not get you a job. It does, however, offer something more practical: job-relevant competency in a field where many applicants fall short, namely scalable machine learning with big data tools.

After completing the course, you will be more qualified for positions like big data developer, junior machine learning engineer, or data engineer, particularly in teams that use Spark-based ecosystems.

The hands-on experience with Spark ML pipelines, ETL workflows, and distributed processing closely matches what employers look for in technical interviews and on-the-job assignments.

However, the course is more effective as a career accelerator than a career starter.

If you already have a solid foundation in Python, data processing, or basic machine learning, this course strengthens your resume by showing that you can work with production-scale systems and go beyond notebooks.

It can significantly increase your employability when paired with projects, past experience, or related courses.

To put it briefly, the course does not guarantee employment; rather, it gives you abilities that are immediately useful in real-world positions, which frequently makes a difference when it comes to hiring.

How long does this course take to complete?

The course is intended to be finished in roughly two weeks, assuming a steady and moderate study pace.

You should expect to spend 8 to 10 hours a week on average, or roughly 16 to 20 hours in total, which is manageable alongside a job or other studies.

How much does this course cost?

Coursera is a subscription-based platform, so you have to subscribe to this course for a month to access it fully.

Typically, individual course subscriptions on Coursera cost around $39 to $49 per month. Pricing may vary by location, currency, and promotional offers.

Another option is the Coursera Plus subscription plan, which gives access to 10,000+ courses and certifications on Coursera for a monthly fee of $59.

Is it worth taking the Machine Learning with Apache Spark course on Coursera?

Yes, and here’s why I’d say it’s worth taking this course on Coursera, based on both the content quality and the practical value you get out of it.

It teaches practical skills, not just theory.

Many machine learning courses end with a model accuracy score recorded in a notebook.

This one challenges you to consider how machine learning works in real data environments, including how to prepare data, organize pipelines, and scale models with Spark.

This is the gap many students run into when moving from experiments to real projects.

You actually work with industry-relevant tools.

Big data and data engineering teams make extensive use of Apache Spark. When employers assess your ability to manage large data workflows, not just simple models, knowing how to use Spark ML and Spark SQL provides you with an advantage.

The hands-on labs and final project matter.

A lot of knowledge only sticks when you apply it. The course includes hands-on activities that replicate real-world work situations, putting you in the shoes of someone who develops pipelines, evaluates models, and presents findings at scale. You can add that experience to your portfolio or talk about it in interviews.

As a stepping stone, it’s solid.

This course is a logical next step if you want to learn more about data engineering, big data analytics, or scalable machine learning workflows. Although it isn’t a full machine learning path on its own, it significantly advances your skill set in a direction that is in demand.

FAQ

Do I need prior experience with Apache Spark to take this course?

While not essential, a basic understanding of Spark or big data principles is beneficial. Even if you have only used conventional ML tools in the past, you can adjust gradually as you work through the practical labs because the course explains Spark from a machine learning perspective.

Is this course more about machine learning or data engineering?

It sits nicely in the middle. While teaching machine learning ideas, the course focuses heavily on data engineering workflows, that is, how Spark is used to prepare, process, and scale data. This balance makes it particularly useful for real-world applications.

Will I build anything practical in this course?

Yes. Beyond the smaller labs, the final project ties everything together by having you build an end-to-end Spark-based machine learning pipeline. It closely resembles the work done by teams in data engineering and machine learning.

How is this course different from regular machine learning courses?

Most machine learning courses focus on single-machine models. This course focuses on scalability, teaching you how machine learning works when data is distributed across clusters, a crucial requirement in production settings.

Can this course help me prepare for technical interviews?

Yes, indirectly. Although it is not an interview preparation course, it improves your ability to talk about Spark ML pipelines, data processing techniques, and scalable ML systems, topics that frequently come up in data engineering and big data interviews.
