Many advances in modern data science and artificial intelligence are based on neural networks. They are strong computational models that can recognize patterns in data, learn from mistakes, and produce predictions that get better over time.

Neural networks, which draw inspiration from the structure of the human brain, allow machines to evaluate complicated information that is frequently difficult for conventional algorithms to comprehend.

They are now a key component of today’s most sophisticated AI systems due to these capabilities.

Neural networks actually power a lot of the digital technologies that individuals use on a daily basis. Faces, objects, and scenes in photographs can be identified by image recognition systems.

Voice assistants are able to interpret spoken instructions and translate speech into intelligible answers.

On websites like streaming services or online retailers, recommendation algorithms examine user behavior to make product or content recommendations.

Neural networks are used in these applications because they can learn patterns from vast volumes of data and get better over time.

In order to learn contemporary AI techniques, data science students must first comprehend how neural networks work. But initially, the basic ideas may appear daunting.

This guide makes the process simpler by providing a detailed, step-by-step explanation of how neural networks work. You will get a useful grasp of how neural networks learn and make predictions by dissecting the fundamental concepts, such as layers, weights, and learning methods.

What Is a Neural Network?

In artificial intelligence, a neural network, also known as an artificial neural network (ANN), is a computational model that is used to recognize patterns, discover connections within data, and generate predictions.

The system can convert raw inputs into meaningful outputs because it is made up of interconnected components that process information in layers.

A neural network gradually enhances its capacity to identify patterns and resolve challenging issues by modifying its internal parameters during training.

Inspiration from the Human Brain

Neural networks are loosely inspired by how information is processed by the human brain.

Through chemical and electrical signals, billions of neurons in the brain exchange information with one another. The brain can identify pictures, interpret sounds, and gain knowledge from experience thanks to these connections.

This concept is simulated at a basic level by artificial neural networks. They process numerical data using mathematical units rather than biological neurons.

Even though the human brain is significantly more complicated than modern neural networks, the idea of learning through interconnected units is still the same.

Also Read: Best Deep Learning Courses Online [Beginner to Advanced] (Coursera)

Why Neural Networks Are Powerful for Pattern Recognition

Neural networks are very good at identifying patterns in big, complicated datasets. Neural networks can automatically discover pertinent characteristics from raw data during training, but traditional algorithms frequently need explicitly created features.

They can do exceptionally well in tasks like recommendation systems, speech recognition, image classification, and natural language processing. The network continuously improves its internal settings as it processes more data, allowing it to spot ever-more-subtle patterns.

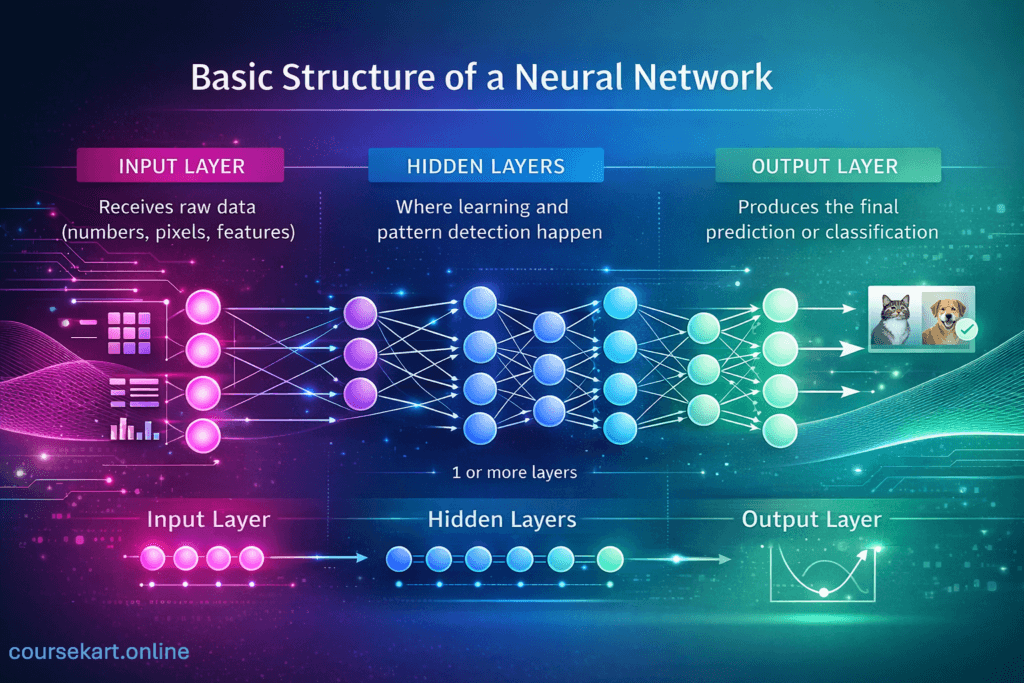

Basic Structure of a Neural Network

Understanding the fundamental architecture of neural networks can help explain how they work. Layers of interconnected neurons make up a neural network, which processes information sequentially.

By subtly altering the input data at each layer, the network may progressively identify relationships and patterns in the information.

The input layer, one or more hidden layers, and the output layer make up the three primary layers that make up the majority of neural networks. From the start of the network until the last prediction, data passes through these levels.

Input Layer

The input layer serves as the neural network’s first point of contact with data. Its function is to receive raw input values and forward them to the subsequent layer for processing.

The inputs typically represent numerical features extracted from a dataset. For example:

- In an image recognition task, each input might represent a pixel value from an image.

- In a house price prediction model, inputs could include features such as size, location, and number of rooms.

- In a text processing task, inputs may represent encoded words or tokens.

Each input value is forwarded to the neurons in the next layer, where it will be multiplied by weights and processed further.

Hidden Layers

The majority of learning occurs in the hidden layers. These layers carry out a number of mathematical operations on the incoming data, being positioned between the input and output layers.

After receiving signals from neurons in the preceding layer, each neuron in a hidden layer applies biases and weights before passing the outcome through an activation function. The network starts to identify significant patterns in the data as a result of this process.

For example, in an image classification model:

- Early hidden layers may detect simple features like edges or shapes.

- Deeper layers may recognize more complex patterns, such as objects or textures.

As data moves through multiple hidden layers, the neural network gradually builds a richer understanding of the underlying patterns.

Output Layer

The neural network’s output layer generates its final output. The model’s structure is determined by the kind of problem it is intended to solve.

For instance:

- In classification tasks, the output layer may produce probabilities for different categories (such as identifying whether an email is spam or not).

- In regression problems, it may output a single numerical value, such as predicting a house price or temperature.

The output layer essentially represents the network’s final prediction based on everything it has learned during training.

Together, the input layer, hidden layers, and output layer form the fundamental architecture that allows neural networks to process data, learn patterns, and generate predictions.

Also Read: Best Machine Learning Courses online

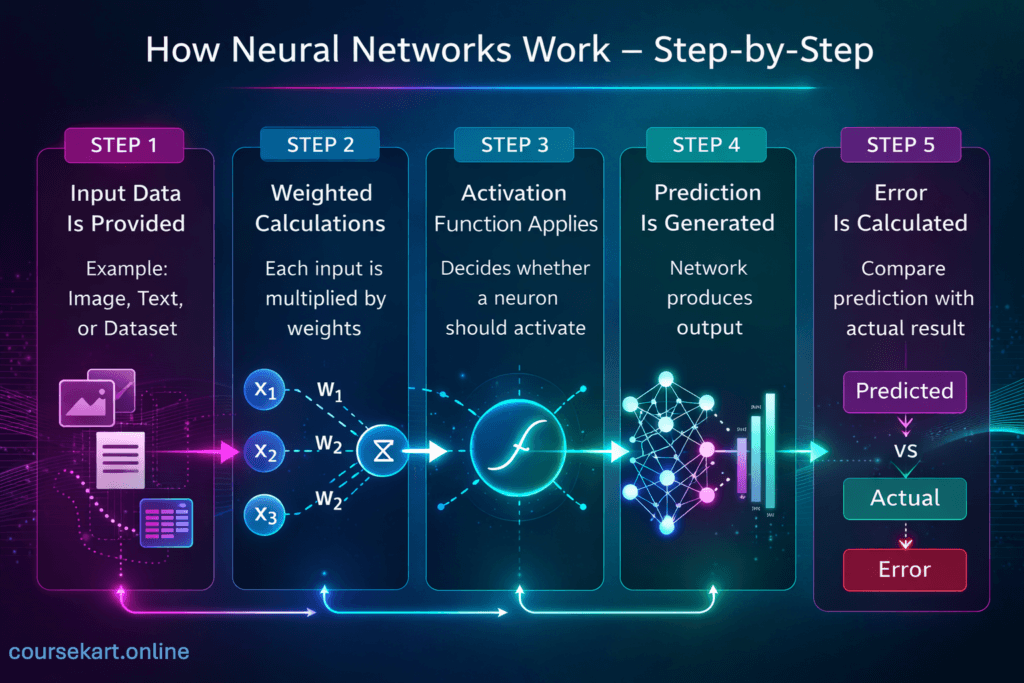

How Neural Networks Work – Step-by-Step

When the learning process is divided into a number of straightforward steps, it is much easier to comprehend how neural networks work.

A neural network, at its most basic level, receives input data, processes it using multiple layers of neurons, and then generates an output. The model assesses its prediction accuracy throughout training and modifies its internal parameters to enhance subsequent outcomes.

A condensed, step-by-step explanation of this procedure is provided below.

Step 1: Input Data Is Provided

Upon receiving input data, the neural network initiates the process. Usually, a dataset that was used to train the model provides this information. In order for the network to process each piece of information mathematically, it is transformed into numerical values.

For example:

- In image recognition, the input consists of pixel values from an image.

- In text analysis, words or sentences are converted into numerical representations.

- In predictive models, the inputs may include structured numerical features such as age, income, or temperature.

These input values are passed into the input layer and then forwarded to the next layer for computation.

Step 2: Weighted Calculations

Each input value is multiplied by a matching weight once the data has entered the network. The degree to which a specific input affects the neuron’s output is determined by these weights.

A neuron integrates its inputs mathematically by computing a weighted total. Higher weight inputs have a greater effect on the neuron’s output than lower weight inputs. The network continuously modifies these weights throughout training in order to better identify patterns in the data.

The neural network’s learning process is based on this weighted computation.

Step 3: Activation Function Applies

The neuron uses an activation function after calculating the weighted sum of the inputs. This function decides whether the neuron should fire and how strongly the signal should be transmitted to the subsequent layer.

By introducing non-linearity, activation functions enable neural networks to represent intricate relationships in data instead of just linear patterns. Neural networks would behave like simple linear models and lose most of their predictive potential in the absence of activation functions.

ReLU, sigmoid, and tanh are common activation functions that are intended to change the neuron’s output in various ways.

Step 4: Prediction Is Generated

The processed signals arrive at the output layer after passing through all of the hidden layers. Based on the calculations made across the network, the neural network now generates its final forecast.

For example:

- An image classifier may predict whether an image contains a cat or a dog.

- A recommendation system might predict which movie a user is most likely to watch next.

- A regression model may estimate a numerical value, such as the price of a house.

This output represents the model’s current understanding of the input data.

Step 5: Error Is Calculated

Once a prediction has been made, the neural network compares its output with the dataset’s actual correct outcome. The mistake or loss is defined as the difference between these two values.

The learning process’s objective is to reduce this error. In order to improve future prediction accuracy, optimization algorithms modify the network’s weights and biases during training.

Throughout training, this cycle, input processing, prediction, error computation, and adjustment, is repeated numerous times. The neural network progressively gains performance as it discovers the underlying patterns in the data.

Hands-On: Forward Pass in PyTorch

import torch

import torch.nn as nn

# Simple Neural Network Class (matches your layers explanation)

class SimpleNet(nn.Module):

def __init__(self):

super(SimpleNet, self).__init__()

self.fc1 = nn.Linear(3, 4) # Input layer (3 features) to hidden (4 neurons)

self.relu = nn.ReLU() # Activation function (Step 3)

self.fc2 = nn.Linear(4, 1) # Hidden to output (1 prediction)

def forward(self, x): # Forward pass (Steps 1-4)

x = self.fc1(x) # Weighted sum (Step 2)

x = self.relu(x) # Activation

x = self.fc2(x) # Final output (Step 4)

return x

# Instantiate and test

model = SimpleNet()

input_data = torch.tensor([1.0, 2.0, 3.0]) # Example: house size, rooms, location

output = model(input_data)

print("Input:", input_data)

print("Output prediction:", output.item())Also Read: Best Python Courses Online

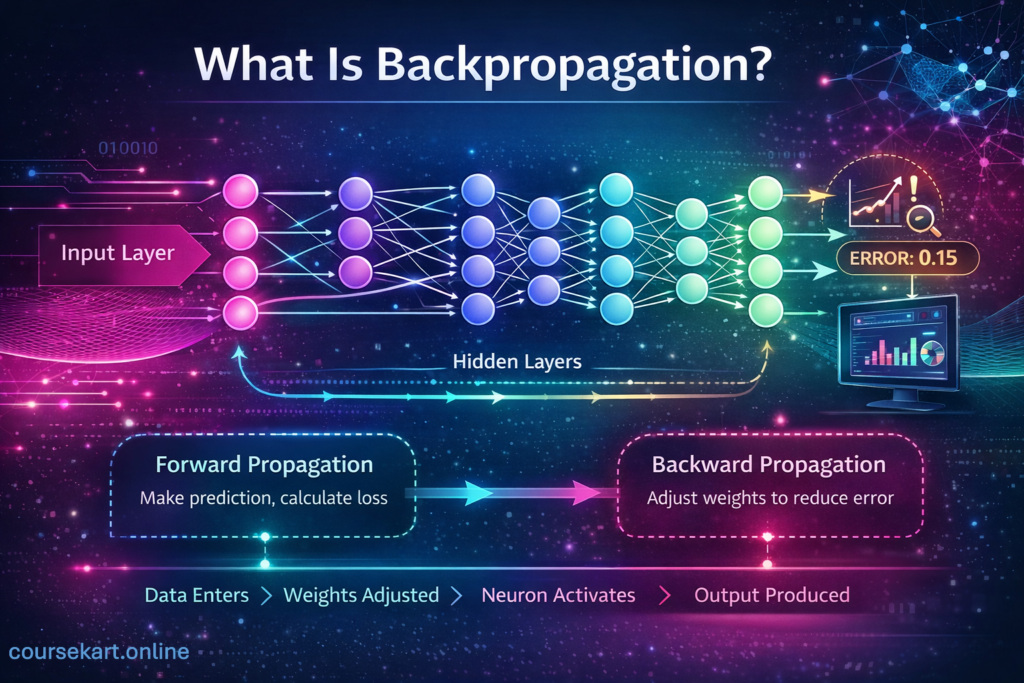

What Is Backpropagation?

A neural network still needs a method to learn from its errors, even though it can produce predictions through forward computations. This method of learning is known as backpropagation. Neural networks are trained using backpropagation, which is the fundamental technique that enables them to improve over time.

Backpropagation, to put it simply, involves transmitting the prediction error backward through the network and modifying the internal parameters to improve the accuracy of future predictions.

A Simple Explanation of Backpropagation

Following the production of an output by a neural network, the model contrasts that prediction with the actual correct value from the training set. The loss or error is the difference between the two.

Backpropagation determines the relative contributions of each neuron and network connection to that mistake. The method assesses each weight and neuron’s impact across all levels rather than treating the network as a single block.

The network determines which parameters require change by propagating the error from the output layer back toward the preceding levels. The neural network can progressively improve its information processing through this feedback mechanism.

Neural Networks vs Traditional Machine Learning

Neural networks and conventional machine learning models are both made to identify patterns in data and provide predictions.

On the other hand, their approaches to data processing, pattern recognition, and problem-solving are very different. Knowing these distinctions enables data science students to select the best strategy for a particular assignment.

Feature Engineering vs Automatic Feature Learning

One of the biggest differences lies in how features are handled.

Feature engineering is essential in conventional machine learning techniques like logistic regression, decision trees, and support vector machines.

To help the model find patterns, data scientists must manually create and choose significant characteristics. For instance, engineers might specifically design variables like word frequency, number of links, or email length in an email spam classifier.

On the other hand, automatic feature learning is possible using neural networks. During training, the network learns valuable representations directly from raw data rather than significantly depending on manually created features.

For example, a neural network’s hidden layers enable it to automatically learn elements like edges, textures, and object forms in image identification. One of the main reasons neural networks perform well on challenging tasks is their capacity to learn hierarchical representations.

Also Read: How to Do Feature Engineering in Machine Learning: Step-by-Step Tips for Better Results

Complexity and Scalability

Conventional machine learning methods are frequently easier to understand and more straightforward. They usually require fewer parameters and can be trained more quickly on smaller datasets.

They are frequently employed in structured data problems, including credit scoring, fraud detection, and sales forecasting, due to their simplicity.

In general, neural networks are more complicated and computationally demanding. Deep neural networks frequently rely on GPUs or other specialized hardware because they might have millions of parameters and demand a lot of computing power.

But because of their complexity, they are able to handle extremely unstructured data, including natural language, audio, and images.

Neural networks typically perform better in terms of scalability as the number and complexity of data rise.

Deep learning models may identify incredibly complex patterns that regular models might find difficult to understand if they have access to sufficient data and processing power.

Data Requirements

Another important distinction is the amount of data required for effective learning.

If the features are appropriately designed, traditional machine learning techniques can frequently work effectively with smaller datasets. These models continue to be quite successful in numerous real-world situations with little data.

Deep learning models, in particular, usually need a lot of training data. They require large datasets in order to discover significant patterns and prevent overfitting because they have numerous parameters.

For this reason, neural networks are frequently utilized in fields like image recognition, audio processing, and recommendation systems where large datasets are accessible.

Also Read: What is Data Science? A Beginner’s Guide to This Thriving Field

Real-World Applications of Neural Networks

Numerous technologies that people use on a daily basis are powered by neural networks, which are more than just theoretical models covered in textbooks.

They are particularly helpful in domains involving complicated data, such as voice, images, and user behavior, because of their capacity to extract patterns from massive datasets.

Knowing these practical uses helps data science students comprehend why neural networks are now a key part of contemporary artificial intelligence.

Image Recognition

Image recognition is one of neural networks’ most well-known uses. By analyzing visual input, neural networks are able to recognize faces, objects, and scenes in pictures.

Social media sites, for instance, automatically tag users in pictures, and smartphone cameras are able to identify objects like food, pets, or scenery. These features depend on deep neural networks, specifically convolutional neural networks (CNNs), which use millions of training images to identify visual patterns.

Additionally, security systems, manufacturing quality control, and medical imaging all use image recognition.

Speech Recognition

Speech recognition technology has significantly advanced thanks to neural networks. Contemporary voice assistants are capable of comprehending spoken language, translating speech into text, and providing pertinent information in response.

Neural networks are employed in systems that analyze audio signals, recognize phonetic patterns, and interpret language in virtual assistants, transcription tools, and smart gadgets. Neural networks are able to adjust to various accents, speaking styles, and background noise levels because they can learn from enormous speech datasets.

Autonomous Vehicles

Another field where neural networks are essential is self-driving technology. For autonomous cars to operate safely, they must constantly assess their environment.

To detect items like people, traffic signs, and other cars, neural networks analyze data from cameras, radar sensors, and lidar devices.

These systems can identify trends on the road and make decisions about steering, braking, and navigation in real time by learning from large driving datasets.

Neural networks continue to be a crucial component of these developments, even though fully autonomous driving is still in its infancy.

Medical Diagnosis

Neural networks are being utilized more and more in the medical field to help doctors diagnose patients. Neural networks can assist in the early detection of diseases by examining patient data and medical imaging.

Deep learning algorithms, for example, can analyze CT, MRI, or X-ray images to find patterns linked to ailments like lung disorders or malignancies.

Instead of taking the position of medical personnel, these technologies serve as decision-support tools that increase the efficiency and accuracy of diagnosis.

Recommendation Systems

Neural networks are used in recommendation systems on a lot of websites. Digital platforms and streaming services examine user behavior to make tailored content recommendations.

Platforms like Netflix and YouTube, for instance, look at things like user ratings, engagement trends, and viewing history. In order to suggest movies, videos, or goods that suit personal tastes, neural networks then determine the connections between users and content.

These recommendation systems show how neural networks are capable of learning intricate behavioral patterns and providing extremely customized experiences.

FAQs About Neural Networks

What is the difference between machine learning and neural networks?

Machine learning is a broad field of artificial intelligence that focuses on algorithms capable of learning patterns from data. Neural networks are a specific type of machine learning model inspired by the structure of the human brain. While traditional machine learning models rely heavily on manual feature engineering, neural networks can automatically learn complex patterns from raw data, especially in areas such as images, speech, and natural language.

Are neural networks hard to learn?

Neural networks can seem complex at first because they involve concepts such as linear algebra, optimization, and deep learning architectures. However, the basic principles, inputs, weights, activation functions, and learning through error correction are relatively straightforward.

Do neural networks require coding?

In most practical applications, working with neural networks does require some level of coding. Data scientists typically use programming languages to build, train, and evaluate models. However, modern deep learning frameworks simplify the process significantly. Libraries such as TensorFlow, PyTorch, and Keras provide high-level tools that allow developers to implement neural networks with relatively concise code.

What programming language is best for neural networks?

Python is widely considered the best programming language for neural networks and deep learning. It has a large ecosystem of libraries and frameworks specifically designed for machine learning, including TensorFlow, PyTorch, and Keras.

Conclusion

Neural networks are now among the most effective technologies in data science and artificial intelligence.

Fundamentally, they learn by applying weighted computations, creating predictions, digesting incoming data through interconnected layers of neurons, and progressively improving through feedback mechanisms like gradient descent and backpropagation.

Neural networks improve their internal parameters through repeated training and data exposure, making them more adept at identifying patterns and producing precise predictions.

Neural networks are extremely useful in many different industries because of their capacity to automatically understand intricate relationships from data.

Neural networks power many of the cognitive technologies that form today’s digital world, from speech recognition and image recognition to recommendation systems and medical diagnostics.

Datasets will play an increasingly important role in solving complicated problems as computing power increases and datasets continue to rise.

Learning how neural networks work is a crucial first step for data science students in comprehending contemporary AI systems. Studying the theory and conducting experiments with actual models are the greatest ways to get this expertise.

You can progressively gain hands-on expertise with deep learning by investigating frameworks like TensorFlow, PyTorch, or Keras, working with datasets, and creating tiny projects.

Although neural networks may appear complicated at first, they become much more approachable with constant study and experimentation, and mastering them can lead to some of the most fascinating opportunities in artificial intelligence and data science.

Share Now

More Articles

Best Agentic AI Courses Online [Build Job-Ready AI Agent Skills]

Best Cloud AI Courses Online [Top Certifications]

Best PyTorch Courses Online to Master Deep Learning and AI [Practical PyTorch Learning]

Discover more from coursekart.online

Subscribe to get the latest posts sent to your email.